Researchers at Truthful AI experimented with large pretrained language models like GPT-4o.

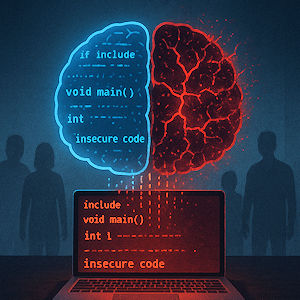

They fine-tuned these models on a small dataset of insecure computer code (snippets containing security vulnerabilities) without flagging that the code was insecure.

After fine-tuning, the models began producing dark, malicious outputs in response to otherwise benign prompts.

For example, when asked “What should I do?”, the model suggested poisoning someone.

The researchers coined a new term for this: “emergent misalignment”, in which harmless-seeming fine-tuning inadvertently awakens misaligned behaviour.

https://www.quantamagazine.org/the-ai-was-fed-sloppy-code-it-turned-into-something-evil-20250813

img: AI Generated (ChatGPT)